Pauline Gerard, Deputy Director, IISD-ELA and Corporate Secretary, talked to our BIT, BTM, InfoSec, and PTEC students about the challenges the IISD is working to resolve in protecting and cleaning up our fresh water resources and species right here in Manitoba. Lake Winnipeg, which is the 11th largest fresh water lake in the world, is under threat due to excessive pollutants entering the watershed. The lake also serves as the sole source of potable water for many northern communities and supplies a significant commercial fishing stock. Gerard invited students to sign up and help the organization develop technology-backed ideas and solutions to stop further degradation of our precious fresh water resources.

Gerard guided students through the process of developing sustainable ideas by working on a common challenge affecting the agriculture sector today: providing agriculture producers with cost-effective solutions for managing drainage and the climate. The students were split into groups to discuss how the problem could be solved. One student from each group shared their idea with the audience. Ideas involved Internet-connected sensors, apps, and more.

The five challenges the IISD is working on for Lake Winnipeg are:

- Providing agricultural producers with cost-effective solutions for water and land management

- Assessing fish populations and health using non-invasive techniques

- Preventing microplastics from entering the lake

- Enabling local testing of drinking water quality in remote northern communities

- Financing sustainable development initiatives by connecting individual and group funding sources

The AquaHacking Challenge is an eight-month competition for the best ideas, connecting teams of innovative people with industry mentors and workshops to create innovative and sustainable solutions. Technology-minded youth between the ages of 18 and 35 are encouraged to register to be part of a solution team for this competition, which starts in February with winners declared in October. Winners will receive part of a $50,000 prize pool to fund further development of their solutions.

To learn more about the AquaHacking 2020 Challenge for Lake Winnipeg and how to participate, visit https://bit.ly/HackLakeWpg or stop by the IISD booth on January 31st during the DisruptED Conference at the RBC Convention Centre.

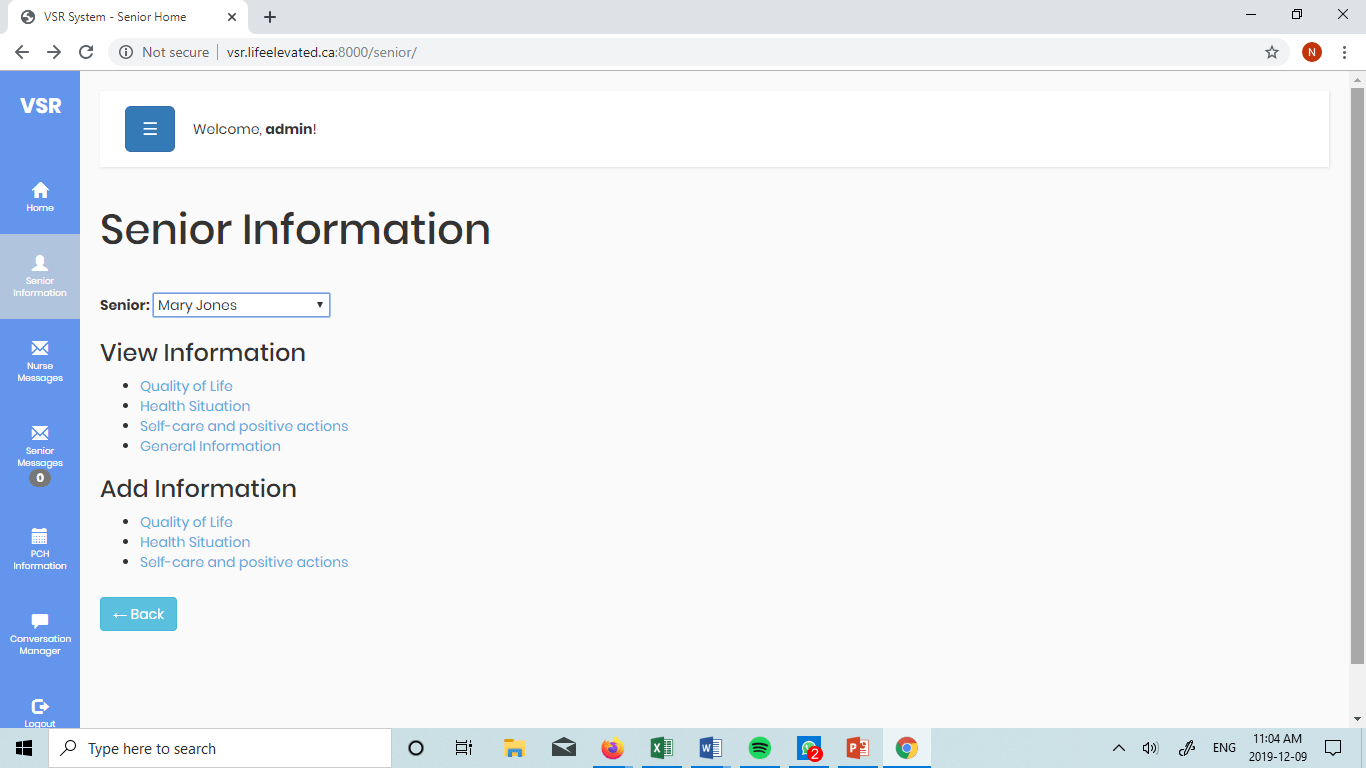

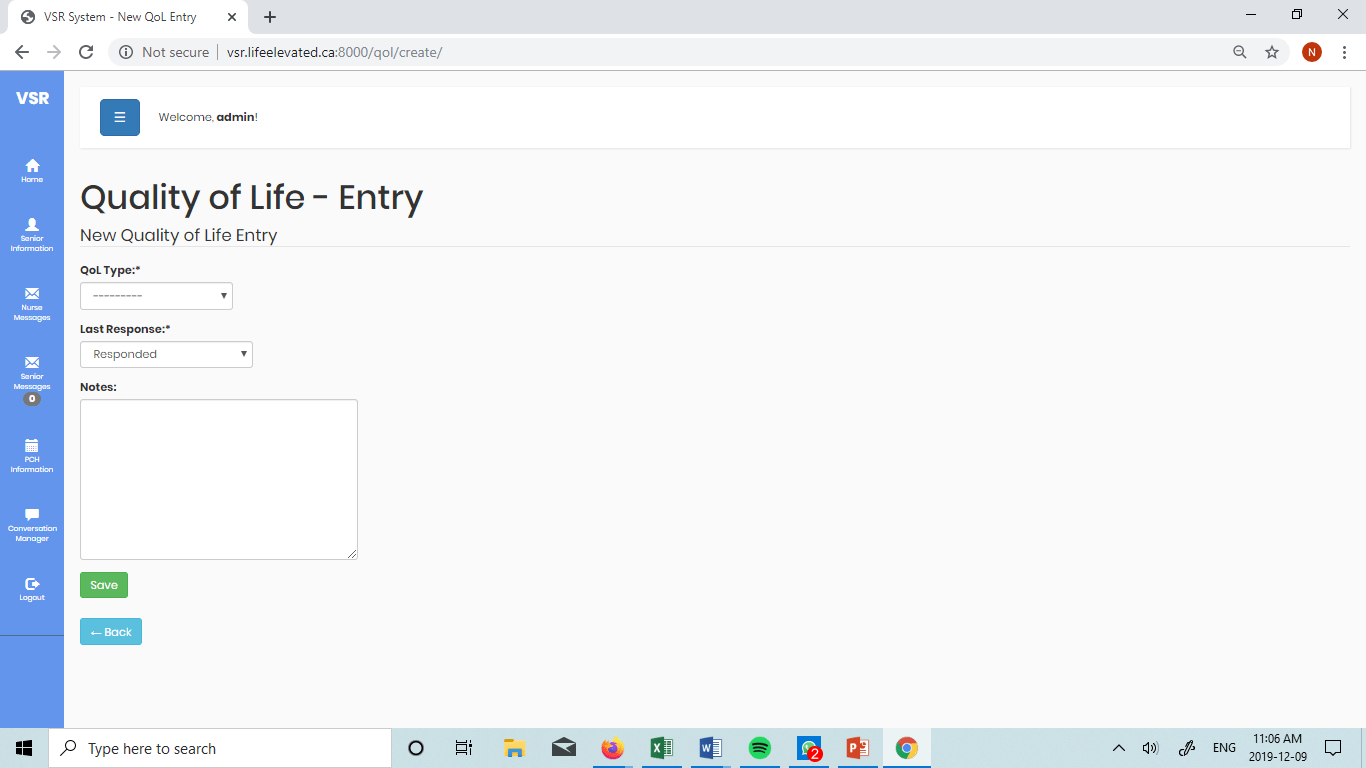

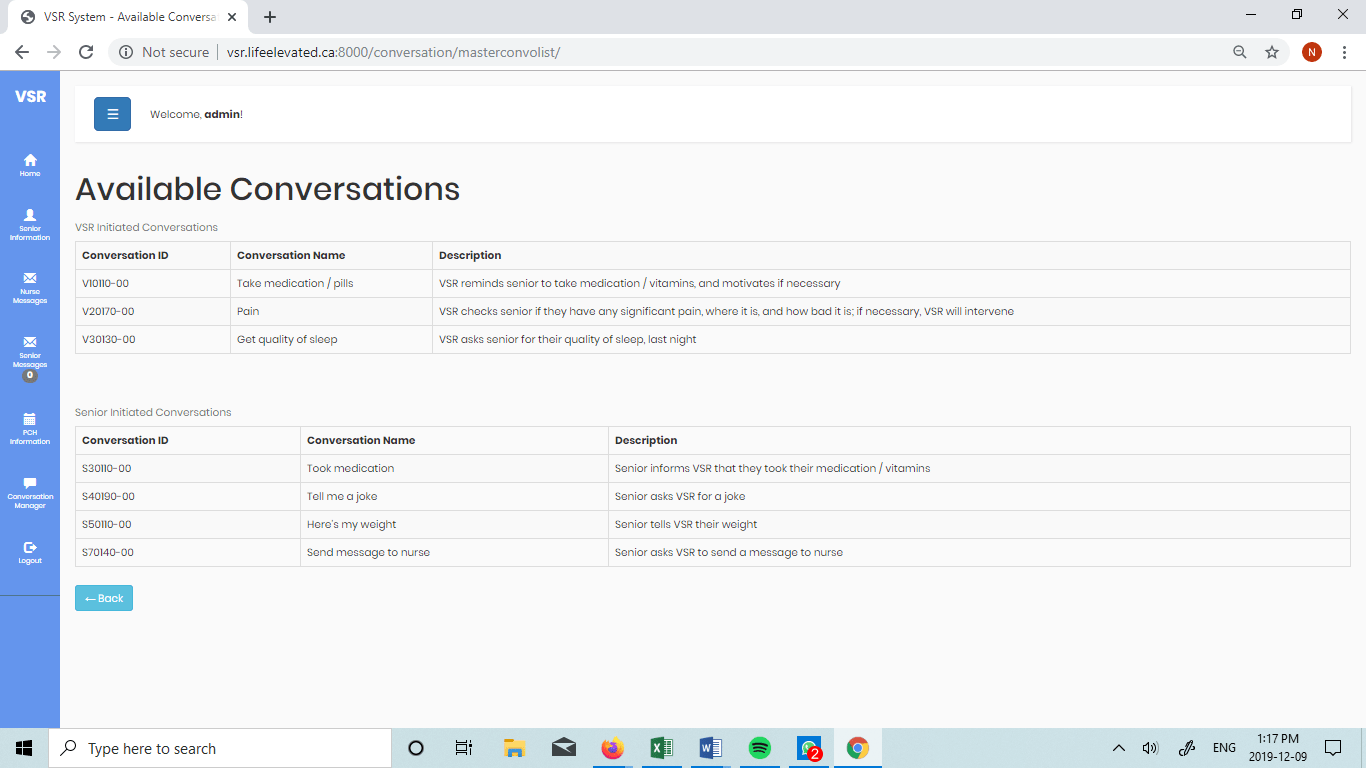

Millions of seniors who require personal care struggle with maintaining their independence, creating strain on themselves, caregivers, and nurses. Life Elevated was created to address the issue. In collaboration with students at the ACE Project Space, the firm is building a practical electronic assistive service to automate tasks that can be performed by a computer.

Building an application for an assistive device

The student team assigned to the Life Elevated project developed a database management system and a website to complement an assistive voice-activated device, called a Virtual Senior Roommate or avatar, that the firm had developed. The students extracted health, quality of life, and general activity information from the avatar and presented the data in a form that nurses could analyze. In addition, the students learned how to use Cura LulzBot to design and print a 3D case for the avatar to allow for easier transport.

Deliverables

The Life Elevated team completed the following deliverables for the project during the fall term at the ACE Project Space:

- Database system to support the solution

- Website with information collected from the avatar, seniors, and nurses

- 3D-designed and printed cases for the avatar

What our students are saying

“I learned how to work effectively in a team, how to use Python/Django and git, and how to prioritize tasks. I learned team building by actively participating in group discussions and voicing my own opinions on matters at hand.” – Simon Tran

“Having this 4-month experience, it was an opportunity for me to enhance my soft skills, such as organizational, leadership, communication, and some technical skills as well.” – Nelson Munoz

“In my experience in the ACE Project Space, I’ve learned to work in a team, and by that, I mean I learned to accept other people’s opinions. There are a lot of differences in the way people do things. I self-learned new technologies and applied what I already knew to these technologies to further enhance my skills in development.” – Jose Jacap

Technologies used

- Python

- PyCharm

- Django

- PostgreSQL

- Balsamiq Mockups 3

- Drawio

- Cura LulzBot software


Brent Wennekes, Director of Business Development (Manitoba) at Mitacs, described how their Accelerate program pairs entrepreneurs and companies working across all sectors of the economy with student research opportunities. Mr. Wennekes provided details about the application process and the funding model, under which a business contributes $7,500 and Mitacs provides a $15,000 research award to support a research student intern for four months. Mitacs funding has helped launch many of the four-month projects delivered at the ACE Project Space.

Mitacs funding recipient and CEO of ioAirFlow, Matt Schaubroeck, described how he leveraged Mitacs funding to kick-start his new venture while working a full-time job. Mr. Schaubroeck’s company is building an AI-supported solution using a network of temperature sensors to provide building owners and tenants with the data they need to increase energy efficiency. The research student embedded at the ACE Project Space as part of the ioAirFlow project was integral in building a marketable solution that won stage time at the Falling Walls Lab pitch contest in Berlin.

Stephen Lawrence, ACE Project Space Coordinator, described the opportunity and process through which entrepreneurs can draw on the application development skills of fourth-term students at the ACE Project Space, with support from Mitacs. Mr. Lawrence described how the mutually beneficial relationship provides students with valuable real-life project experience while providing entrepreneurs with the ability to bring their ideas to fruition.

To learn more about how to bring your business ideas to life at the ACE Project Space, please contact Stephen Lawrence, ACE Project Space Coordinator, or visit our ACE Project Space website.


Project Term: Winter 2019

Establishments such as restaurants, pubs, and hotels often have TVs set up to provide customers with live news and sports entertainment. Taiv was created to replace broadcasters’ ads with targeted ads from the establishment. The original solution did not optimize ad placement during commercial breaks.

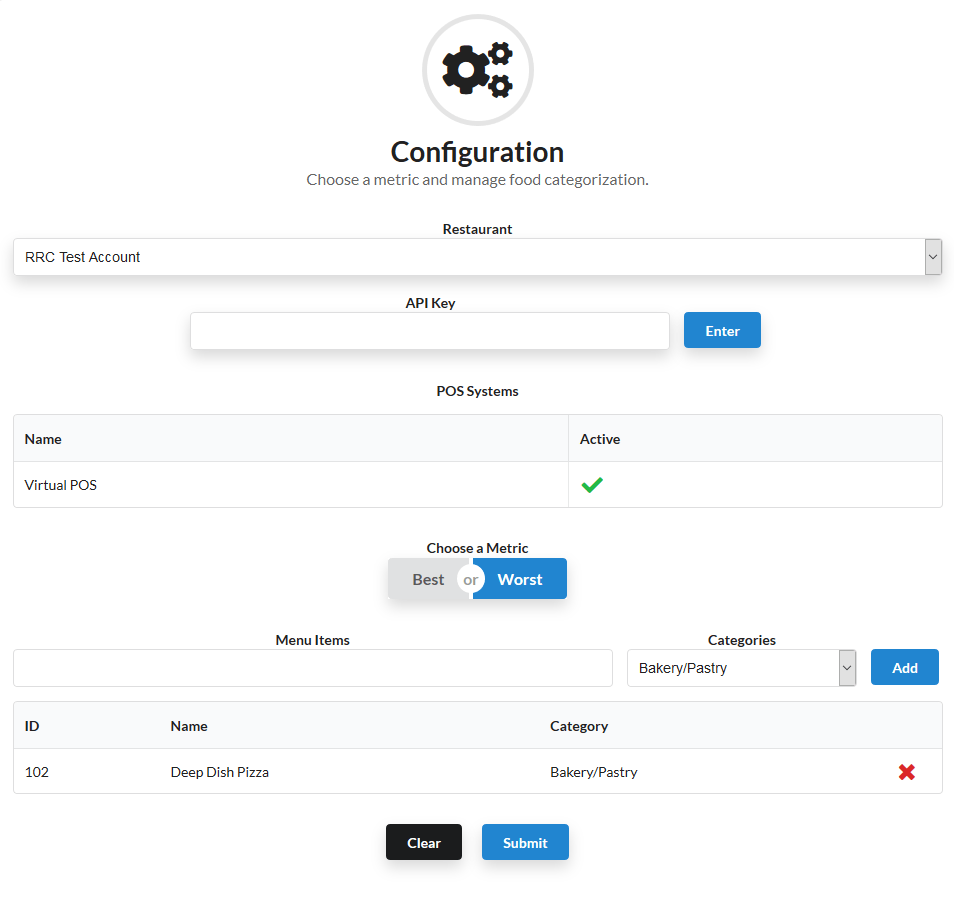

BIT and BTM students built a solution that integrates with an establishment’s point-of-sale (POS) data to fetch and analyze real-time purchases to improve ad placement.

Background

Taiv is a software and hardware product that detects commercials during TV sports or other live broadcasts shown at a restaurant or other establishment and replaces them with preferred ads to help boost sales. This product prioritizes menu item ads based on consumer purchase data. Ads are selected based on analysis of real-time purchase data from the POS machines in use within the establishment. In addition, establishment owners and managers can create and upload their own custom ads.

The commercial break detection functionality already built by the client could not determine which menu item to display as an ad. BIT and BTM students were tasked with building the missing functionality.

Building the solution

To create the missing functionality for commercial breaks, the students completed the following deliverables:

- Data scraping – To simulate how the software was going to work, data was needed to perform machine learning. The students scraped content from the web and built an effective data set.

- Statistical analysis – To determine which items to sell during a given commercial break, the students performed regression analysis on the POS sales data to identify trends. The resulting analysis enabled the students to organize the menu items into best seller and worst seller categories.

- Menu item scoring system – Using the regression analysis data, the menu items received an ad placement score based on timing and associated sales. The menu items with the highest scores were selected for ad placement.

- Configuration file – To store the criteria determining when a menu item was in either the best seller or worst seller categories, the students built a configuration file populated by the Web UI.

- Web UI – To capture the establishment user preferences and populate the associated configuration file, the students built an associated web UI.

- Food categorization – To measure the performance of each food category, food categorization was added to the web UI for users.
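The statistical analysis, scoring, and configuration steps above can be sketched roughly as follows. This is only an illustration under stated assumptions, not the students' implementation: the sales figures are made up, the `CONFIG` thresholds stand in for whatever the web UI actually writes to the configuration file, and ranking items by the slope of a least-squares trend line is one plausible reading of "regression analysis".

```python
from statistics import mean

# Hypothetical thresholds, standing in for the configuration file
# that the web UI populates (names and values are assumptions).
CONFIG = {"best_seller_min_avg": 10.0, "worst_seller_max_avg": 4.0}

# Hypothetical hourly unit sales per menu item (made-up numbers).
HOURLY_SALES = {
    "wings": [8, 10, 12, 14],
    "nachos": [6, 6, 6, 6],
    "salad": [4, 3, 2, 1],
}


def sales_trend(sales):
    """Least-squares slope of sales over equally spaced periods (needs >= 2 points)."""
    xs = range(len(sales))
    x_bar, y_bar = mean(xs), mean(sales)
    numerator = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, sales))
    denominator = sum((x - x_bar) ** 2 for x in xs)
    return numerator / denominator


def categorize(sales, config):
    """Label an item using the average-sales thresholds from the config."""
    average = mean(sales)
    if average >= config["best_seller_min_avg"]:
        return "best seller"
    if average <= config["worst_seller_max_avg"]:
        return "worst seller"
    return "neutral"


def rank_for_ads(hourly_sales):
    """Order item names by sales trend, fastest-growing first."""
    return sorted(
        hourly_sales,
        key=lambda name: sales_trend(hourly_sales[name]),
        reverse=True,
    )


if __name__ == "__main__":
    for item in rank_for_ads(HOURLY_SALES):
        sales = HOURLY_SALES[item]
        print(item, sales_trend(sales), categorize(sales, CONFIG))
```

Keeping the thresholds in a separate structure mirrors the idea of letting establishment staff adjust the best seller and worst seller criteria without touching the analysis code.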

What our students are saying

“Coming in with a technical background, I knew there is still a lot of management skills to be learned. I am indeed grateful for this opportunity. I also had the opportunity of learning a new Web framework (React.js) which was used to develop our project. As a BTM student in the Space, I have learned the industry best-practices in terms of project management and business analysis. My teammates has been really helpful for the success of this project. Special thanks to Justine, Alex, Dawood.” – Mauricio Usatai

“I am very grateful for this experience. This is due to the amount of growth I have experienced within a short period of 4 months. So far, I have learned how to write program python and react.js programs. I have also learned a great deal of people management, as well as business analysis and project management. My teammates has been really awesome in sharing their knowledge with whenever I hit an obstacle. Special thanks to Justine, Mauricio, and Alex.” – Dawood Abdulsalam

“From the very beginning I already knew that I wanted to be a web developer and it is a very good opportunity that I was able to learn React. The things I’ve learned from all the people I work with here in space will be very beneficial for my career. I am very grateful for Mauricio, Dawood and Justine who help me along the way, this would all not be possible without them.” – Alexander Fernandez

“At the beginning of this journey, I didn’t have a clear vision of the end result. Coming in with a programming experience I got from the college, I was able to utilize the skills to contribute to the success of this project. Although, I had to learn a new programming language (React.js) which seemed difficult at first; I am grateful that I learned it, and I am now more confident in my future as a web developer than I was four months ago. I am also very grateful to my teammates (Dawood, Alex, and Mauricio) for their contribution to this project.” – Justine Dancel

View an example of how TAIV works: https://taiv.tv

Technologies used

- React.js

- Firebase

- Google Cloud

- Node.js

- Python

- HTML

- CSS

- Bootstrap

- Android Studio

- React Native

- Machine Learning (Natural Language Processing)

TAIV configuration

This Natural Language Processing (NLP) project was a research survey of existing NLP resources and algorithms that could be applied to a commercial application that one of our ACE Project Space partners was developing.

This project was an interesting foray into Machine Learning that the faculty at the ACE Project Space felt was well suited to a Business Technology Management (BTM) student in the space. We were very fortunate that one of our students was pursuing diplomas in both Business Information Technology (BIT) and BTM. Having strong skills in programming and analysis, he was really able to make the most of this project. The general goal of the project was to explore how NLP can be used to determine user intentions based on their use of language.

Starting out on the project, our student didn’t know what NLP was and had no prior knowledge of Python. Luckily, our research coordinator, Elsanussi Mneina, was versed in Machine Learning, specializing in linguistics and NLP. After an introduction between our coordinator and our student, our student felt supported on this new and uncertain project. Elsanussi was instrumental in pointing our student towards resources, such as the NLTK library, and shared some of his own experiences related to Data Science and NLP.

In the early weeks, our student was able to teach himself Python, relying on his programming skills from BIT and applying principles of object-oriented programming learned in the BIT and BTM programs. While picking up Python, he worked with BeautifulSoup, the Twitter API, and JSON to learn how to fetch and read data from various sources.

Our student identified and explored sentiment analysis as a way of classifying text, rating a word, sentence, or paragraph as negative, neutral, positive, and so on. Using NLTK, our student explored tokenizing text, identifying stop words (common words that add little meaning to a sentence), and determining which parts of speech held the most importance in a sentence. He also explored and tested similar classifiers and their suitability to the project scope, including NLTK SentiWordNet, NLTK VADER Sentiment Intensity Analyzer, NLTK Sentiment Analyzer, and TextBlob Sentiment Analysis. His goal was to determine if any of the classifiers were customizable, if they could be used to classify text, and if they could be trained.
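To make the pipeline described above more concrete, here is a toy sketch of tokenizing text, dropping stop words, and rating the result as negative, neutral, or positive. It deliberately uses only the Python standard library rather than NLTK or TextBlob, so it does not reflect those libraries' APIs; the stop-word list, the word scores in `LEXICON`, and the function names are all made up for illustration.

```python
import re

# Tiny illustrative stop-word list (an assumption, not NLTK's list).
STOP_WORDS = {"the", "a", "an", "is", "was", "and", "it", "this", "of", "to", "i"}

# Hypothetical word scores: positive numbers are favourable, negative unfavourable.
LEXICON = {"good": 1, "great": 2, "love": 2, "bad": -1, "terrible": -2, "hate": -2}


def tokenize(text):
    """Lowercase the text and split it into word tokens."""
    return re.findall(r"[a-z']+", text.lower())


def remove_stop_words(tokens):
    """Drop common words that add little meaning to the sentence."""
    return [token for token in tokens if token not in STOP_WORDS]


def classify(text):
    """Sum the lexicon scores of the remaining tokens and map the total to a label."""
    tokens = remove_stop_words(tokenize(text))
    score = sum(LEXICON.get(token, 0) for token in tokens)
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"


if __name__ == "__main__":
    for sentence in ("The food was great and I love it",
                     "This is a terrible movie",
                     "The table is brown"):
        print(sentence, "->", classify(sentence))
```

A fixed lexicon like this cannot be trained, which is one reason the question of whether a classifier is customizable and trainable mattered for the project.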

Technologies used:

NLTK, TextBlob, BeautifulSoup4, Pandas, Jupyter, JSON, Unicode, Notepad++, Python 3.5 (32-bit)

This project presents its own challenges: not only is EEG data notoriously difficult to interpret, but there are also more than 20 different signals that vary over time in many different ways.

By using machine learning algorithms on the EEG data, we intend to be able to predict feelings from the EEG data. In order to do so, much work must be done wading through the data and deciding which parts of the data are relevant to predicting feelings. Different ways of transforming and summarizing the data must be tried before a machine learning algorithm can be used to make predictions.

The knowledge gained by building a successful algorithm could be used to more accurately gauge a person’s feelings while using a computer, watching a movie, or reacting to commercials. This may have practical applications in consumer focus groups or even in the field of psychiatry.
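As one example of what "summarizing the data" can mean, the sketch below cuts each signal into fixed-size windows and reduces every window to its mean and variance, producing a compact set of features a learning algorithm could consume. It is an assumption-laden illustration, not the project's method: the channel names, sample values, and window size are invented, and real EEG work would normally involve filtering and frequency-domain features as well.

```python
from statistics import mean, pvariance


def window_features(signal, window_size):
    """Summarize non-overlapping windows of one signal as (mean, variance) pairs.

    Trailing samples that do not fill a whole window are dropped.
    """
    features = []
    for start in range(0, len(signal) - window_size + 1, window_size):
        window = signal[start:start + window_size]
        features.append((mean(window), pvariance(window)))
    return features


def summarize_channels(channels, window_size):
    """Apply the same windowed summary to every named channel."""
    return {
        name: window_features(signal, window_size)
        for name, signal in channels.items()
    }


if __name__ == "__main__":
    # Made-up samples for two hypothetical channels.
    recording = {
        "channel_1": [1.0, 3.0, 2.0, 2.0, 5.0],
        "channel_2": [0.0, 0.0, 4.0, 8.0, 1.0],
    }
    print(summarize_channels(recording, window_size=2))
```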

To find out more about DEAP, their dataset can be found at the following link: http://www.eecs.qmul.ac.uk/mmv/datasets/deap/

Jonee and Haider have been working together to get a facial recognition program working on a Raspberry Pi. One area in progress is finding a way to classify intermediate stages of recognition so the computer will know which subsets to look at when new pictures come in.

One of the present challenges is handling demographics that aren’t well recognized by the libraries in use, such as children’s faces.

One of the project goals is to come up with a smart algorithm that works with a fast-access database in order to retrieve information efficiently. Ultimately, there are plans to integrate this face recognition technology into a Smart Desk. Technologies used include FP module, OpenCV, and Python.


We have partnered with a local hospital and anticipate that this new system will replace the one they currently use, a simple iPad game that patients play while operating their training wheelchair. The limitation of the existing system is that iPad gameplay is two-dimensional and unable to capture important three-dimensional aspects of operating a wheelchair. Furthermore, their current sensors operate unreliably.

Thus far, our research student, Toan Thanh Le, has researched what kinds of new sensors we could instead mount on the wheelchair. Our next goal is to develop a VR experience that might be able to scan a local environment in order to prepare a training environment.

Our research assistant, Matthias Y, has so far evaluated and compared suitable AR and VR technologies, and the team has shortlisted a brand and model we might like to try out.

It is still early days for this project, but the coming months hold much excitement and anticipation as our team starts to test out ideas and prove concepts.

Working with Python, he has been using machine learning to train the system to distinguish human names, English and international, from extraneous data. He is also exploring sentiment analysis, where the computer could look at data such as movie reviews to deduce whether a movie was ‘good’ or ‘bad’ based on its review and the frequency of certain words used in the review.
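The idea of judging a review by the frequency of its words can be illustrated with a very small Naive Bayes classifier. This is a sketch under assumptions rather than the project's code: the four training reviews are invented, the two classes are assumed to be equally likely (so class priors are left out), and add-one smoothing is used so that unseen words do not zero out a score.

```python
import math
from collections import Counter

# Made-up labelled reviews, purely for illustration.
TRAINING = [
    ("a great and moving film", "good"),
    ("great acting and a great story", "good"),
    ("a boring and terrible film", "bad"),
    ("terrible pacing and boring dialogue", "bad"),
]


def train(examples):
    """Count how often each word appears in reviews of each label."""
    word_counts = {}
    for text, label in examples:
        word_counts.setdefault(label, Counter()).update(text.split())
    return word_counts


def classify(review, word_counts):
    """Return the label whose word frequencies best explain the review."""
    vocabulary = set().union(*word_counts.values())
    best_label, best_score = None, -math.inf
    for label, counts in word_counts.items():
        total = sum(counts.values())
        # Sum of log-probabilities with add-one smoothing.
        score = sum(
            math.log((counts[word] + 1) / (total + len(vocabulary)))
            for word in review.split()
        )
        if score > best_score:
            best_label, best_score = label, score
    return best_label


if __name__ == "__main__":
    model = train(TRAINING)
    print(classify("great story", model))
    print(classify("boring film", model))
```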

As this project is in early stages, we anticipate that it will develop into a conversational user interface that could enhance a desk user’s experience.

The physical desk itself will behave as a portable computer cart that is designed to fold down and nest into a smaller format. Technologies that will be embedded into the Smart Desk include a facial recognition system, a conversational user interface built on Natural Language Processing, and ultrahaptic controls. The prototype of the physical cart is presently in the works, and we anticipate that this structure will be complete around mid-summer 2018.

The end goal for this project is that a user would be identified when they occupy the desk space; in turn, the desk would customize the environment and settings to the user’s preferences. The user would be able to interact with their environment not only by traditional mouse and keyboard, but also through conversational features and ultrahaptic controls. We anticipate being able to station one or more smart desks for use in the future RRC Innovation Centre.
